I realized there might be circuit breakers and automated filtering on the server, so I took steps to avoid hitting those. First, I rotated the User-Agent headers to avoid fingerprinting, and I also used a VPN proxy to send requests from different IP addresses. Additionally, I put a random delay of about 1 second between requests so the script didn’t hammer the server. That was probably more generous than it needed to be, but I wanted the script to run unattended, so I erred on the side of caution.

"User-Agent": "Mozilla/5.0 (Macintosh Intel Mac OS X 10_15) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/.70 Safari/537.36"
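The rotation and delay described above can be sketched roughly as follows. This is a minimal illustration, not the original script: the `USER_AGENTS` pool, the function names, and the 0.5 to 1.5 second window are all assumptions made for the example. The `session` argument is expected to be a Requests-style session, and `proxies` is the optional Requests-style proxy mapping.

```python
import random
import time

# Hypothetical pool of User-Agent strings; swap in real browser strings for actual use.
USER_AGENTS = [
    "ExampleBrowser/1.0 (hypothetical agent A)",
    "ExampleBrowser/2.0 (hypothetical agent B)",
    "ExampleBrowser/3.0 (hypothetical agent C)",
]


def random_headers(rng=random):
    """Pick a User-Agent at random so requests do not all share one header."""
    return {"User-Agent": rng.choice(USER_AGENTS)}


def random_delay(base=1.0, jitter=0.5, rng=random):
    """Return a delay in seconds drawn uniformly from [base - jitter, base + jitter]."""
    return rng.uniform(base - jitter, base + jitter)


def polite_get(session, url, proxies=None, sleep=time.sleep, rng=random):
    """Wait a random interval, then fetch url with a randomly chosen User-Agent.

    session is anything with a Requests-style get(), e.g. requests.Session().
    proxies is an optional Requests-style mapping for routing through a proxy.
    """
    sleep(random_delay(rng=rng))
    return session.get(url, headers=random_headers(rng), proxies=proxies, timeout=30)
```

With Requests installed, a call would look something like `polite_get(requests.Session(), url)`. Passing `sleep` and `rng` as parameters is only there to make the function easy to test without real waiting.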

There are many tutorials for web scraping out there, so I won’t get too detailed here.

Free GPU access is a big deal because online GPU resources can be expensive and complicated to set up. In learning to use the Colab notebook, I realized I needed a large dataset to train the GPT-2 language model in the kind of writing I wanted it to produce.

A few years ago I had bought a subscription to ArtForum magazine in order to read through the archives. I was, and still am, interested in the art criticism of the 60s and 70s because so much of it came from disciplined and strong voices. Art criticism was still a big deal back then and taken very seriously. I went back to the ArtForum website and found the archives were complete back to 1963 and presented with a very consistent template system. Some basic web scraping could yield the data I needed.

#Scraping with Python into an SQLite3 database

The first thing I did was pay for access to the archive. It was worth the price and I got a subscription to the magazine itself. Everything I did after that was as a logged-in user, so nothing too sneaky here. I used Python with the Requests and Beautiful Soup libraries to craft the scraping code.
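For the SQLite3 side of the scraping, here is a minimal sketch of how parsed articles could be stored and later exported as training text. The `articles` table, its columns, and the function names are hypothetical and chosen only for illustration. Fetching with Requests and parsing with Beautiful Soup are left out, so the functions take already-extracted fields.

```python
import sqlite3


def open_db(path=":memory:"):
    """Open the database and create the (hypothetical) articles table if missing."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS articles (
            url    TEXT PRIMARY KEY,  -- one row per archive page
            title  TEXT,
            author TEXT,
            year   INTEGER,
            body   TEXT
        )
        """
    )
    return conn


def save_article(conn, url, title, author, year, body):
    """Insert one scraped article; re-running on the same URL overwrites the row."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO articles (url, title, author, year, body) "
            "VALUES (?, ?, ?, ?, ?)",
            (url, title, author, year, body),
        )


def export_corpus(conn):
    """Join all stored article bodies into one text blob, oldest first."""
    rows = conn.execute("SELECT body FROM articles ORDER BY year, url")
    return "\n\n".join(body for (body,) in rows)
```

Keying the table on the URL means an interrupted, unattended run can be restarted without creating duplicate rows, and `export_corpus` gives a single text file's worth of content to feed into fine-tuning.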

#Finetune gpt2 free

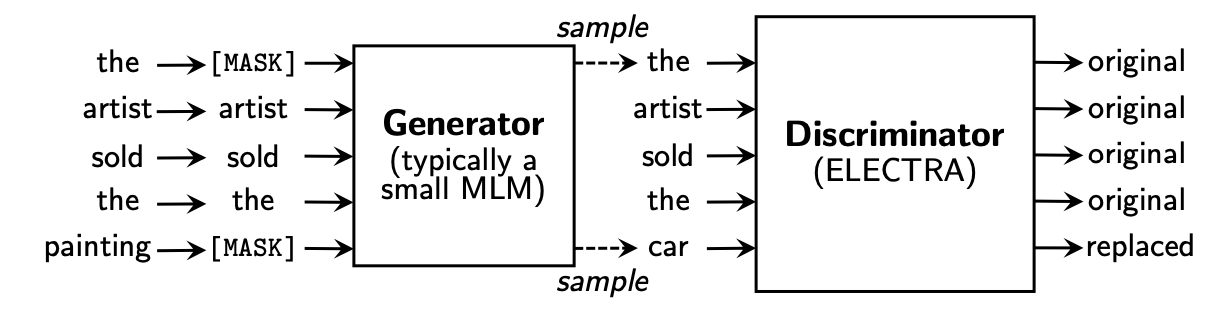

I also wanted a tool that would yield language specific to art and culture.

My first impulse was to make use of an NVIDIA Jetson Nano that I had bought to do GAN video production. I had spent a few months trying to get that up and running but got frustrated with dependency hell. I pulled it back out and started from scratch using recent library updates from NVIDIA. Getting that little GPU machine running with modern PyTorch and TensorFlow was a huge ordeal, and it is just too underpowered. Specifically, 4 GB of RAM isn’t enough to load even basic models for manipulation. I was asking much more from it than the design intent, but was hoping it was hackable. Nope.

FWIW, I did come up with a Gist that got it very close to an ML configuration. Others may find it valuable if lost in that rabbit hole.

The breakthrough came while I was digging around the community for tutorials that focused on deploying language models. Somebody recommended a Google Colab notebook by Max Woolf that simplified the training process. I discovered that Google Colab is not only a free service, it also lets scripts use an attached GPU.

#Finetune gpt2 generator

I recently published a book of computer-generated photographs and wanted to also generate the introductory text for it. I looked around for an online text generator that lived up to the AI hype, but they were mostly academic or limited demos.

I included many details that were part of the decision process at each phase. If you are looking for a concise tech explainer, try this post instead.